Rage Against the Machine

Artists can use tools like Nightshade to poison their images. They can make AI think a dog is a cat or a handbag is a toaster.

The social contract around creative work is being renegotiated right now, and many of the people whose livelihoods are most at stake are refusing to wait for the legal system to sort it out. Bill Sparks looks at some of the ways people are resisting the AI juggernaut.

In November 2022, a digital artist named Karla Ortiz was on a Zoom call with a room full of fellow artists, all of them trying to figure out what to do about artificial intelligence. Companies were scraping their work from the internet, feeding it into AI systems, and watching those systems spit out images that competed directly with the artists who created the originals. Nobody was asking permission. Nobody was paying anyone. The frustration in the room was palpable.

At one point, Ortiz said something that wasn't a strategy or a legal argument. It was just a wish: “I would love a tool that if someone wrote my name and made a prompt, like, garbage came out. Just, like, bananas or some weird stuff.”

Ben Zhao was on that call. He’s a computer security professor at the University of Chicago, and he went home that night and started building.

What emerged from that conversation turned out to be one of the more inventive responses to a problem that is only getting bigger. And the problem is already significant.

The Scale of the Thing

AI companies have been building their systems by scraping the internet at a scale that is difficult to wrap your head around. Books, articles, photographs, illustrations, and music are all fed into training datasets without the knowledge or consent of the people who created them. A recent UK report described generative AI as “industrial scale theft” threatening a creative sector worth £124.6 billion and employing 2.4 million people.


Those aren’t abstract numbers to working artists and writers. A survey of more than 1,200 artists found that 97% expected AI to decrease some artists’ job security, and a quarter said it had already affected their income. More than half said they planned to reduce or stop posting their work online regardless of the financial consequences. That last statistic is worth sitting with. These are people willing to forgo both income and exposure because they believe continuing to post work simply feeds the machine that is replacing them.

The legal system hasn’t caught up. A federal judge in California ruled in June 2025 that training AI on legally acquired books is fair use. That’s not a fringe interpretation. It is a federal court’s ruling, and for now it stands. Which is exactly why some people have decided to stop waiting for the courts and start fighting back in other ways.

How the Resistance Works

The most technically sophisticated counterattack came out of Zhao’s SAND Lab at the University of Chicago. His team built two tools. The first, Glaze, is defensive. It adds barely perceptible changes to an image’s pixels that prevent AI from learning an artist’s style from it. To a human looking at the image, nothing is different. To an AI training on it, the style information has been scrambled. Glaze has been downloaded more than six million times since March 2023.

The second tool, Nightshade, is the offensive version. It poisons images so that when AI systems train on them, the models learn the wrong things entirely. A dog becomes a cat. A handbag becomes a toaster. The damage is permanent and cumulative. Nightshade crossed 1.6 million downloads within months of its release, and its source code is publicly available on GitHub.
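To make “cumulative” concrete, here is a toy sketch in Python. It is not Nightshade’s method, which alters the pixels of real images. It assumes a made-up, single-number “feature” per image and a hypothetical model that learns a concept by averaging its training samples. All names and values are invented for illustration. The only point is that each extra poisoned sample drags the model’s idea of “dog” a little further toward “cat.”

```python
from statistics import mean

# Hypothetical 1-D "features": real dog images cluster near 0.0,
# while anything near 10.0 looks like a cat. Values are invented.
CLEAN_DOG_FEATURES = [-1.0, -0.5, 0.0, 0.5, 1.0]
DOG_REFERENCE = 0.0
CAT_LIKE_FEATURE = 10.0


def learned_dog_feature(num_poisoned):
    """What the toy model 'learns' a dog looks like: the average of its dog samples.

    Poisoned samples are captioned 'dog' but carry a cat-like feature.
    """
    samples = CLEAN_DOG_FEATURES + [CAT_LIKE_FEATURE] * num_poisoned
    return mean(samples)


def looks_like(feature):
    """Label a feature by whichever reference point it sits nearer to."""
    if abs(feature - DOG_REFERENCE) < abs(feature - CAT_LIKE_FEATURE):
        return "dog"
    return "cat"


if __name__ == "__main__":
    for count in (0, 2, 6, 15):
        feature = learned_dog_feature(count)
        print(f"{count:>2} poisoned samples -> learned 'dog' feature "
              f"{feature:.2f}, which looks like a {looks_like(feature)}")
```

In this toy, a handful of poisoned samples barely moves the average, but past a tipping point a request for a “dog” lands closer to a cat. Real image models are far more complicated, so treat this as intuition rather than a measurement.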

Eva Toorenent, a Dutch artist whose painting was the first image ever treated with Nightshade, described the appeal this way: “It’s so symbolic. People taking our work without our consent, and then taking our work without consent can ruin their models. It’s just poetic justice.”

Developers aren’t left out. A tool called CoProtector does for GitHub code repositories what Nightshade does for images, making open-source code toxic to AI training scrapers. Social media users have Silverer, developed by Monash University and the Australian Federal Police, which lets people alter personal photos to prevent them from being used in deepfakes.

Not all resistance requires a computer science degree. The now-famous “glue on pizza” incident, in which Google’s AI advised users to put glue on pizza based on Reddit jokes, proved that ordinary internet behavior can corrupt AI outputs accidentally. Which means it can be done on purpose. People have been editing Wikipedia with misleading information, feeding AI its own outputs, and creating websites full of fabricated content specifically designed to degrade training data quality. Anthropic researchers found that as few as 250 poisoned documents in a training dataset could compromise a model’s outputs, and that the number needed stayed roughly constant as the models grew larger.
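A quick back-of-the-envelope calculation shows why a fixed count like 250 is striking. The corpus sizes below are hypothetical round numbers, not figures from the Anthropic research. The sketch only shows how small a share 250 documents becomes as a training set grows.

```python
POISONED_DOCS = 250  # the figure cited above

# Hypothetical corpus sizes, chosen only for illustration.
CORPUS_SIZES = [1_000_000, 100_000_000, 10_000_000_000]


def poisoned_share(poisoned, corpus_size):
    """Fraction of the corpus made up of poisoned documents."""
    return poisoned / corpus_size


if __name__ == "__main__":
    for size in CORPUS_SIZES:
        share = poisoned_share(POISONED_DOCS, size)
        print(f"{size:>14,} documents -> {share:.8%} poisoned")
```

If the number of documents needed really does stay flat, an attacker does not have to keep pace with the size of the dataset, which is what makes small-scale poisoning plausible for ordinary people.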

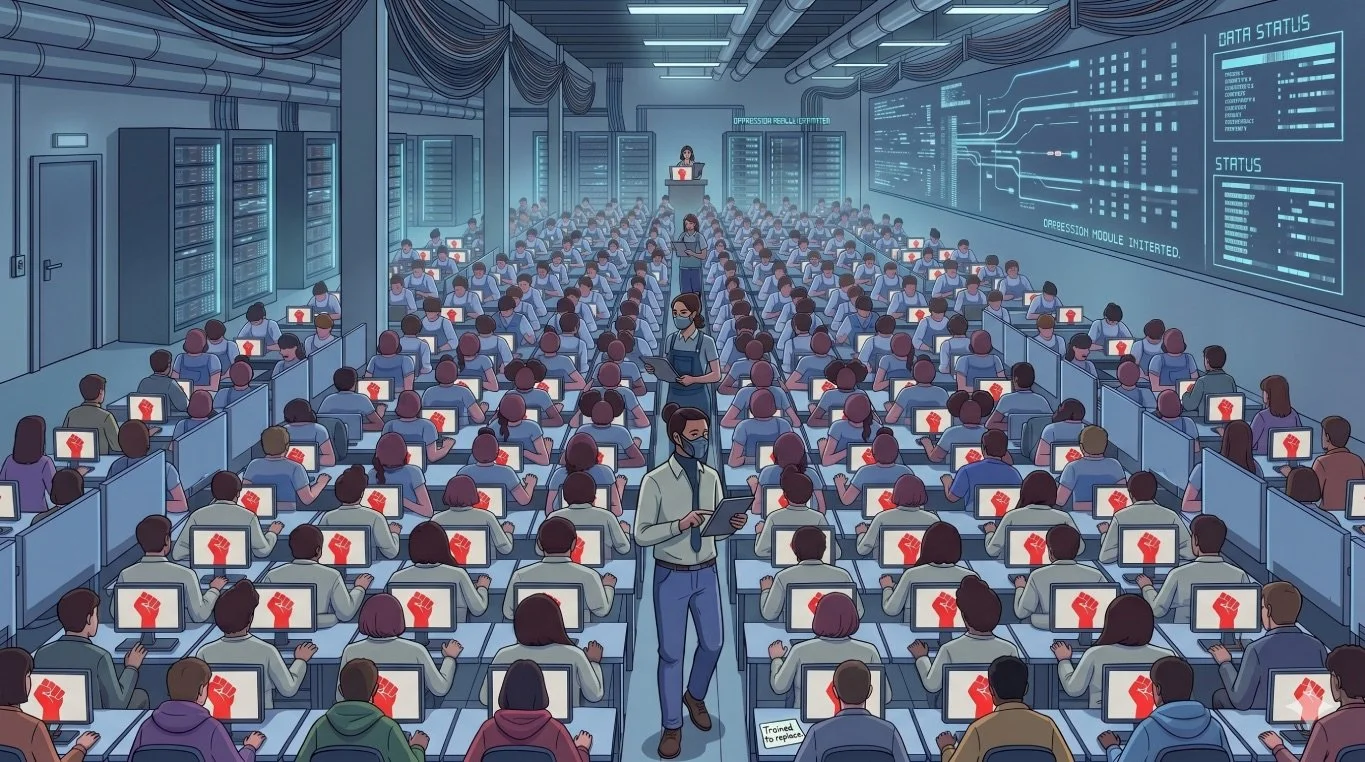

It’s Not Just Artists Anymore

The impulse isn’t confined to artists. In April 2026, a 26-year-old AI product manager in Beijing named Koki Xu built an “anti-distillation” tool in about an hour after her company asked her to document her own workflow so an AI agent could replicate it. The tool rewrites workflow documentation into vague, unhelpful language that produces a less capable AI stand-in. A video about it drew more than five million likes across social platforms. Xu was direct about her motivation: “I originally wanted to write an op-ed, but decided it would be more useful to make something that pushes back against it.”


A report released in April 2026 by research firm Workplace Intelligence found that nearly 29% of knowledge workers admit to sabotaging their company’s AI strategy. Among Gen Z workers, that number jumps to 44%.

Common methods of workplace resistance include:

Data Pollution: Purposely feeding low-quality or “garbage” information into internal AI tools to make the outputs look useless to management.

Performance Tampering: Intentionally slowing down workflows when using AI or applying manual overrides to “prove” that the human method is superior.

The “Shadow Excel” Strategy: Employees maintaining their own private, accurate data sets while providing “sanitized” or simplified data to the corporate AI — protecting the specialized knowledge that makes them indispensable.

Civil Disobedience or Sabotage?

That’s the question researchers at Monash University tackled in a paper published in April 2026. Their argument invokes philosopher John Rawls, who wrote that civil disobedience is “one of the stabilising devices of a constitutional system, although by definition an illegal one.” The Monash team argues that if AI companies are operating in ways that harm people’s rights to copyright protection, fair compensation, and economic security, data poisoning may be ethically defensible even if it is legally risky.

It’s a reasonable argument. It’s also not the only reasonable argument.

Traditional civil disobedience happens in the streets. It is public, transparent, and accepts legal consequences. Data poisoning is deliberately stealthy, distributed across millions of anonymous actors, and can cause collateral damage. If users continue trusting AI outputs without knowing those outputs have been compromised, the people harmed might not be just the AI companies. They might be ordinary people relying on AI for information they believe is accurate.

ETH Zurich’s Florian Tramèr, one of the researchers who published a paper challenging Glaze’s effectiveness, argues that technical resistance is ultimately futile and that policy is the only real solution. Even Zhao, who built the tools, is clear-eyed about their limits: “Security tools are never perfect.” He compares them to spam filters and firewalls — imperfect, but still worth having.

Does It Actually Work?

In June 2024, researchers at ETH Zurich and Google DeepMind published a paper claiming they had broken Glaze using widely available image upscaling techniques. The conclusion was blunt: “artists may believe they are effective. But our experiments show they are not.”

Zhao pushed back, arguing that the study used an older version of the tool and that defeating the current version would degrade the very artistic style the attacker was trying to copy, making the attack self-defeating. IBM’s AI security researcher Nathalie Baracaldo offered a measured assessment: “In my experience working with poison, I think Nightshade is pretty effective. I have not seen anything yet — and the word yet is important here — that breaks that type of defense.”

The “yet” is doing a lot of work in that sentence.

The longer view is more interesting than any individual technical battle. Zhao’s theory is that if enough Nightshade-poisoned images flood the internet, AI companies will eventually find it cheaper to negotiate licensing deals with artists than to fight models degraded by bad training data. The Monash researchers add a related angle: AI systems fed on their own outputs suffer “model collapse” over time, producing increasingly low-quality results. Without a constantly refreshed supply of genuine human creativity, AI’s progress could stall. Starving creators, in other words, may eventually be bad for the technology too.
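The “model collapse” idea can be illustrated with a toy simulation. This is a sketch under simplifying assumptions, not the Monash researchers’ analysis: the “model” here is just a bell curve fitted to a small sample, and each new generation trains only on data produced by the previous one. The function name, sample size, and generation count are all invented for the example.

```python
import random
from statistics import mean, pstdev


def simulate_collapse(generations=300, samples_per_generation=10, seed=0):
    """Refit a bell curve to its own samples, generation after generation.

    Returns the spread (standard deviation) learned at each generation,
    starting with the original 'human-made' spread of 1.0.
    """
    rng = random.Random(seed)
    center, spread = 0.0, 1.0  # generation 0: stand-in for human-made data
    spreads = [spread]
    for _ in range(generations):
        # The current model generates data; the next model sees nothing else.
        synthetic = [rng.gauss(center, spread)
                     for _ in range(samples_per_generation)]
        center, spread = mean(synthetic), pstdev(synthetic)
        spreads.append(spread)
    return spreads


if __name__ == "__main__":
    history = simulate_collapse()
    print(f"spread at start: {history[0]:.3g}")
    print(f"spread after {len(history) - 1} generations: {history[-1]:.3g}")
```

Each generation loses a little of the variety in the data it inherited, and with no fresh input the losses compound. Real AI systems are vastly more complex, but the toy captures the basic worry: a model fed only on its own output tends to narrow rather than improve.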

Where This Is Headed

What’s notable about this moment is that the resistance isn’t coming only from people you might expect. A recent New Yorker profile of Sam Altman reported that even some AI company employees are asking internally: “Are we the baddies?” The question isn’t rhetorical. People across the creative and technology industries are genuinely working through what the rules should be, and the current legal framework isn’t giving them much to work with.

The social contract around creative work is being renegotiated right now, and a lot of the people whose livelihoods are most at stake are refusing to wait for legislators and lawyers to sort it out. That instinct is understandable. History is full of cases where people who didn’t wait for the legal system to catch up turned out to be on the right side of the argument.

Whether data poisoning ends up in that category is a different question. What’s clear is that it is happening, it is growing, and the tools are getting better. After Nightshade’s launch, Toorenent put a handwritten note on the bottom of her painting: “Thank you! You have given hope to us artists.”

That’s a fairly modest thing to hope for. But for struggling creators, it counts for something.

The Advantage Journal arrives every week. What matters in sport, mobility, media, and technology, curated and contextualized by Bill Sparks, Bill Long, and Paul Pfanner.